AI-Powered vs. Rules-Based Negative Keyword Tools: A Feature-by-Feature Comparison Guide

The Automation Divide: Why Your Choice of Negative Keyword Tool Matters More Than Ever

The Google Ads industry generates over $300 billion annually, yet the average advertiser wastes 15-30% of their budget on irrelevant clicks. For PPC agencies managing multiple client accounts, this waste translates to thousands of dollars lost each month and dozens of hours spent manually reviewing search term reports. The solution seems simple: automate negative keyword management. But the type of automation you choose determines whether you save time and money or create new problems.

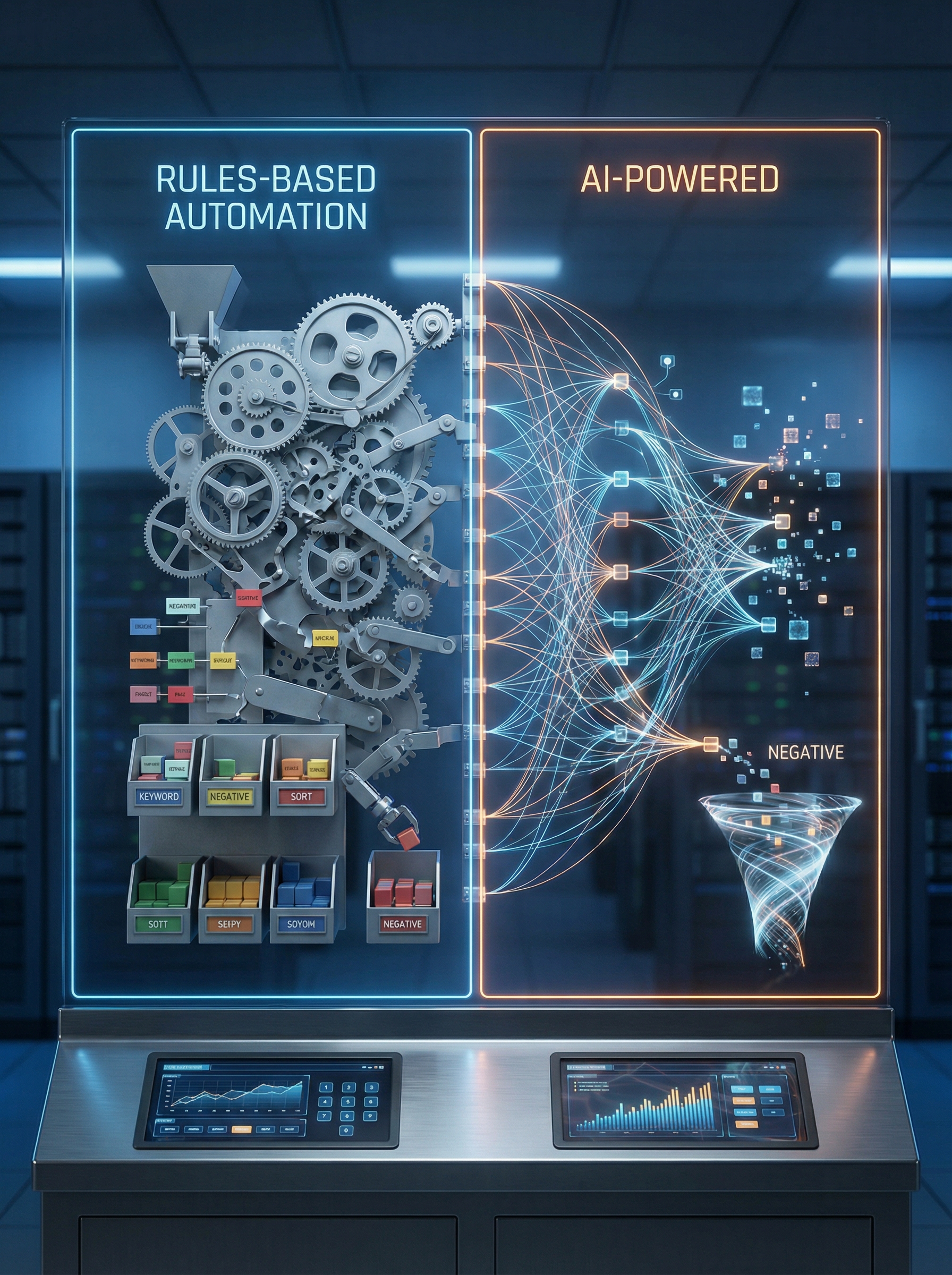

Two distinct approaches dominate the negative keyword automation landscape: rules-based systems and AI-powered platforms. Rules-based tools execute predefined instructions you set manually, such as "exclude any search term containing 'free' or 'cheap.'" AI-powered tools like Negator use natural language processing and contextual analysis to understand business context before making suggestions. According to industry research on AI versus traditional PPC automation, businesses using advanced automation often see 40-60% better results compared to those relying on manual management or basic tools.

The stakes are high. Choose the wrong approach and you risk either blocking valuable traffic or continuing to waste budget on irrelevant searches. This guide provides a detailed feature-by-feature comparison to help you understand which type of negative keyword tool fits your needs, budget, and operational complexity.

Core Technology: Rules vs. Context

Rules-based negative keyword tools operate on explicit instructions you create. You define conditions like "if search term contains X, add as negative" or "if cost per conversion exceeds Y, exclude." These systems excel at executing consistent, repeatable actions across campaigns. They work well when you know exactly which terms to block and can articulate clear criteria.

The limitation becomes apparent with nuance. A search term containing "cheap" might be irrelevant for a luxury brand but perfectly appropriate for a budget retailer. Rules-based systems cannot distinguish context without you creating dozens of exceptions and sub-rules, which quickly becomes unmanageable at scale. Research on AI versus manual negative keyword creation shows that manual rule creation becomes exponentially more complex as account size grows.
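To make the limitation concrete, here is a minimal Python sketch of a keyword rule. The `RULES` list and the two advertisers are hypothetical. The rule engine has no input for who is advertising, so it returns the same verdict for a luxury brand and a budget retailer.

```python
import re

# Hypothetical rule set: each pattern must match as a whole word,
# case-insensitively, anywhere in the search term.
RULES = [r"\bcheap\b", r"\bfree\b"]


def rule_excludes(search_term: str) -> bool:
    """Return True if any rule matches. Business context is never consulted."""
    return any(re.search(pattern, search_term, re.IGNORECASE) for pattern in RULES)


# Two hypothetical advertisers seeing the same query. Because the rule
# ignores business context, both receive the same verdict.
for advertiser in ("luxury_watch_brand", "budget_watch_retailer"):
    verdict = "exclude" if rule_excludes("cheap watches") else "keep"
    print(f"{advertiser}: 'cheap watches' -> {verdict}")
```

Getting the budget retailer's case right would require a per-advertiser exception, and every exception is one more rule to audit as the account grows.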

AI-powered platforms take a fundamentally different approach. These tools analyze search terms in the context of your business profile, active keywords, campaign goals, and historical performance. Negator, for example, uses NLP to understand semantic meaning rather than just matching character strings. The system recognizes that "affordable luxury watches" and "cheap knockoff watches" both contain budget-related language but represent entirely different intent levels.

According to research on machine learning for brand protection and traffic sculpting, AI systems learn from engagement data and predict which types of content are likely to yield better ad performance. This contextual intelligence means fewer false positives and more accurate exclusion recommendations.

Setup Complexity and Time Investment

Rules-based tools typically require significant upfront configuration. You must anticipate which terms to exclude, create comprehensive rule sets, and establish thresholds for automated actions. For a single campaign, this might take 2-3 hours. For an agency managing 50+ client accounts with different industries, goals, and budgets, initial setup can require 100+ hours of strategic planning and rule creation.

Ongoing maintenance adds to the burden. As campaigns evolve, you need to update rules, add exceptions, and adjust thresholds. Search behavior changes seasonally and competitively, requiring regular rule audits to ensure your system remains effective. Many agencies find themselves spending 5-10 hours per week maintaining rule-based systems across their client portfolio.

AI-powered platforms streamline setup dramatically. Negator requires only basic information: your business profile, current keyword lists, and campaign objectives. The system uses this context to begin making intelligent suggestions immediately. Setup for a single account takes 15-30 minutes. For agencies using MCC integration, bulk onboarding can process dozens of accounts in the time it takes to configure a single rules-based system.

The time savings compound over time. While rules-based systems require constant tuning, AI platforms improve autonomously as they process more data. Agencies using Negator report saving 10+ hours per week that previously went to manual search term review and rule maintenance. This efficiency gain allows PPC managers to focus on strategy and optimization rather than operational tasks.

Accuracy and Precision: False Positives vs. Smart Exclusions

The most critical difference between tool types emerges in classification accuracy. False positives occur when a system flags valuable search terms as irrelevant, potentially blocking qualified traffic. False negatives happen when irrelevant terms slip through, continuing to waste budget. Rules-based systems struggle with both because they lack contextual understanding.

Consider a real example. A rules-based system with a rule to exclude "how to" search terms would block "how to choose enterprise software" for a B2B software company, even though this represents high-value educational intent from decision-makers early in the research phase. The system follows instructions without understanding business context or buyer journey stages.
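One way to quantify this before trusting any tool is to score its exclusions against a small hand-labeled sample of search terms. The sketch below uses a hypothetical labeled sample for a B2B software advertiser. It counts false positives (relevant terms excluded) and false negatives (irrelevant terms kept) for the naive "how to" rule.

```python
# Hypothetical hand-labeled sample for a B2B software advertiser:
# (search term, whether the term is relevant to the business)
labeled_terms = [
    ("how to choose enterprise software", True),
    ("enterprise software pricing", True),
    ("how to fix my printer", False),
    ("enterprise software jobs", False),
]


def how_to_rule(term: str) -> bool:
    """Naive rule from the example: exclude any term containing 'how to'."""
    return "how to" in term.lower()


def confusion_counts(terms, excludes):
    """Count false positives (relevant but excluded) and false negatives (irrelevant but kept)."""
    false_positives = sum(1 for term, relevant in terms if relevant and excludes(term))
    false_negatives = sum(1 for term, relevant in terms if not relevant and not excludes(term))
    return false_positives, false_negatives


fp, fn = confusion_counts(labeled_terms, how_to_rule)
print(f"false positives: {fp}, false negatives: {fn}")  # false positives: 1, false negatives: 1
```

The same `confusion_counts` check works for any tool's output, which makes it a reasonable way to compare a rules engine and an AI platform on your own data during a trial.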

AI-powered platforms address this through contextual analysis and protective features. Negator includes a "protected keywords" function that prevents accidentally blocking valuable traffic patterns. You can designate terms that should never be excluded, and the AI respects these boundaries while still analyzing surrounding context. Best practices for AI-powered negative keyword discovery emphasize the importance of combining automation with strategic guardrails.
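The guardrail idea can be sketched as a final filter applied to any suggestion list, regardless of what produced it. The function name and matching behavior below are hypothetical and do not describe Negator's internals. A suggestion is blocked if it contains a protected term as a whole phrase.

```python
import re


def apply_protected_keywords(suggestions, protected):
    """Split suggested negatives into (kept, blocked).

    A suggestion is blocked if it contains any protected term as a whole
    phrase, case-insensitively, so it can never be added as a negative.
    """
    patterns = [re.compile(rf"\b{re.escape(p)}\b", re.IGNORECASE) for p in protected]
    kept, blocked = [], []
    for term in suggestions:
        if any(p.search(term) for p in patterns):
            blocked.append(term)
        else:
            kept.append(term)
    return kept, blocked


suggested = ["how to choose enterprise software", "free printer drivers", "software jobs"]
kept, blocked = apply_protected_keywords(suggested, protected=["enterprise software"])
print(kept)     # ['free printer drivers', 'software jobs']
print(blocked)  # ['how to choose enterprise software']
```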

Industry-wide data points in the same direction. According to 2025 Google Ads industry benchmarks, conversion rates improved 6.84% across industries as marketers adapted to AI-powered campaign automation. Context-aware tools deliver better-qualified traffic because they evaluate not just which words appear in a search, but what those words mean for a specific business.

Scalability: Managing One Account vs. Fifty

For advertisers managing a single Google Ads account with moderate complexity, both approaches can work reasonably well. A rules-based system with carefully crafted rules might adequately handle 5-10 campaigns once properly configured. The manual oversight required remains manageable at this scale.

Agency reality is far more complex. Managing 20, 30, or 50+ client accounts across diverse industries creates exponential complexity. Each client has unique business context, competitive landscapes, seasonal patterns, and budget constraints. Creating and maintaining separate rule sets for each client becomes practically impossible. Strategies for scaling negative keyword management emphasize the need for automation that understands context rather than just executing rules.

AI-powered platforms built for agency use integrate with Google Ads MCC (My Client Center) structures, allowing you to manage multiple accounts from a centralized dashboard. Negator's MCC integration means you can review negative keyword suggestions across all clients simultaneously, approve changes in bulk where appropriate, and maintain individual customization where needed. This architecture is purpose-built for scale.

Consistency across accounts is another scaling advantage. With rules-based systems, each account manager might implement different rule logic, which creates uneven optimization quality across the agency's portfolio. AI platforms apply the same analysis methodology to every account while respecting individual business context, so optimization quality no longer depends on which team member manages the account.

Learning and Adaptation Over Time

Rules-based systems are fundamentally static. They execute the logic you programmed until you manually update them. If search behavior shifts, competitive landscapes change, or new irrelevant traffic patterns emerge, your rules remain unchanged until someone notices the problem and updates the configuration. This reactive approach means you continue wasting budget on new irrelevant terms until the next manual audit.

Seasonal changes illustrate this limitation clearly. A retail client's negative keyword needs differ dramatically between January and November. Search intent, competitive intensity, and traffic patterns shift. Rules-based systems require manual seasonal adjustments, creating operational overhead and potential for human error if updates are forgotten or delayed.

AI-powered systems evolve continuously. As Negator processes more search terms for your account, it learns patterns specific to your business. The system identifies which types of queries convert, which waste budget, and which fall into gray areas requiring human judgment. This learning happens automatically without requiring manual intervention or rule updates.
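The three outcomes named above (converts, wastes budget, gray area) can be illustrated with a deliberately simple threshold heuristic. This is not a learning system, and it is not how Negator classifies terms. It is a hypothetical sketch with made-up thresholds (`min_clicks`, `max_cost`) that shows the kind of buckets a learning system would refine as it sees more data.

```python
from dataclasses import dataclass


@dataclass
class TermStats:
    term: str
    clicks: int
    cost: float
    conversions: int


def bucket(stats: TermStats, min_clicks: int = 20, max_cost: float = 50.0) -> str:
    """Assign a search term to one of three buckets.

    Thresholds are hypothetical, illustrative defaults, not recommendations.
    """
    if stats.conversions > 0:
        return "converting"
    if stats.clicks >= min_clicks or stats.cost >= max_cost:
        return "wasting"  # enough data, no conversions
    return "needs_review"  # too little data to judge either way


for s in [
    TermStats("enterprise crm pricing", clicks=40, cost=120.0, conversions=3),
    TermStats("crm jobs", clicks=35, cost=60.0, conversions=0),
    TermStats("crm for bakeries", clicks=4, cost=9.5, conversions=0),
]:
    print(s.term, "->", bucket(s))
```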

Advanced AI platforms also incorporate cross-account learning while maintaining data privacy. Patterns observed across multiple clients in similar industries inform the system's understanding of what typically represents valuable versus irrelevant traffic for specific business types. This collective intelligence improves suggestion accuracy faster than any single-account rule system could achieve.

Human Oversight and Control Mechanisms

A common concern about AI automation is loss of control. Many PPC managers worry that AI systems will make autonomous decisions that block valuable traffic or waste budget without human approval. This fear often drives agencies toward rules-based systems where every action is explicitly programmed. The concern is understandable, but it usually rests on a misunderstanding of how modern AI tools operate.

Properly designed AI-powered negative keyword tools use a suggestion model rather than full automation. Negator, for example, analyzes search terms and provides recommendations with confidence scoring. You review suggestions and approve which terms to add as negatives before any changes go live. This maintains human strategic oversight while eliminating the tedious manual analysis work.

The approval workflow can be customized based on your risk tolerance and operational preferences. You might auto-approve high-confidence suggestions for certain campaign types while requiring manual review for others. Agencies often implement tiered approval systems where junior team members handle routine suggestions and senior strategists review edge cases or high-spend accounts. Common myths about negative keyword automation often center on fears of losing control, but the reality is increased control through better data and smarter suggestions.
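A tiered approval policy like the one described can be written down as a small routing function. Everything here is a hypothetical sketch: the `Suggestion` fields, the thresholds, and the queue names are assumptions. The sketch also assumes the tool supplies a confidence score between 0 and 1.

```python
from dataclasses import dataclass

# Hypothetical policy values; each agency would set its own.
AUTO_APPROVE_CAMPAIGN_TYPES = {"search"}
AUTO_APPROVE_MIN_CONFIDENCE = 0.9
EDGE_CASE_MAX_CONFIDENCE = 0.6
HIGH_SPEND_THRESHOLD = 50_000.0  # monthly account spend


@dataclass
class Suggestion:
    term: str
    confidence: float            # 0.0-1.0, assumed to be supplied by the tool
    campaign_type: str           # e.g. "search", "shopping"
    account_monthly_spend: float


def route(s: Suggestion) -> str:
    """Return who (or what) should handle a suggested negative keyword."""
    if s.account_monthly_spend >= HIGH_SPEND_THRESHOLD:
        return "senior_review"  # high-spend accounts always get a strategist
    if s.confidence < EDGE_CASE_MAX_CONFIDENCE:
        return "senior_review"  # low-confidence suggestions are treated as edge cases
    if s.campaign_type in AUTO_APPROVE_CAMPAIGN_TYPES and s.confidence >= AUTO_APPROVE_MIN_CONFIDENCE:
        return "auto_approve"
    return "junior_review"


print(route(Suggestion("crm jobs", 0.95, "search", 10_000)))  # auto_approve
```

This sketch checks spend first, so even a high-confidence suggestion in a large account reaches a senior reviewer. Reorder the checks if you would rather auto-approve there as well.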

Transparency is another critical factor. Rules-based systems are transparent by nature because you programmed every action. Quality AI platforms provide similar transparency through explainable recommendations. Negator shows why each suggestion was made, which business context informed the classification, and what patterns triggered the recommendation. This transparency enables informed decision-making and builds confidence in the system.

Cost Considerations: Subscription vs. Savings

Software costs vary significantly between tool types. Rules-based automation tools often come bundled with broader PPC management platforms, with pricing ranging from $200 to $1,000+ monthly depending on feature sets and account volume. Standalone rules engines might start at $100-$300 monthly for basic functionality.

AI-powered specialized tools like Negator typically charge based on account volume and ad spend managed, with agency plans designed for multi-client management. While subscription costs might appear higher than basic rules tools, the relevant comparison is total cost including time investment and results generated.

The ROI calculation must include opportunity cost. If your agency spends 15 hours weekly on manual search term review and negative keyword management at an average fully-loaded cost of $75 per hour, that represents $58,500 annually in labor. A tool that reduces this to 3 hours weekly saves $46,800 in labor cost alone, before accounting for improved campaign performance.
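The labor arithmetic in this example is straightforward to reproduce and to rerun with your own hours and rates. The function name is illustrative, and the sketch assumes 52 working weeks per year, as the figures above do.

```python
def annual_labor_cost(hours_per_week: float, hourly_rate: float, weeks_per_year: int = 52) -> float:
    """Fully loaded annual cost of a recurring weekly task."""
    return hours_per_week * hourly_rate * weeks_per_year


before = annual_labor_cost(15, 75)  # manual review today
after = annual_labor_cost(3, 75)    # with a tool handling most of the analysis
print(f"before: ${before:,.0f}  after: ${after:,.0f}  saved: ${before - after:,.0f}")
# before: $58,500  after: $11,700  saved: $46,800
```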

Performance improvement provides additional ROI. Negator users typically see 20-35% ROAS improvement within the first month as irrelevant traffic is systematically eliminated and budget concentrates on qualified searches. For an agency managing $500,000 in monthly client ad spend at an average 400% ROAS, a 25% ROAS improvement (from 400% to 500%) generates an additional $500,000 in client revenue per month, or $6 million annually, assuming spend holds constant. Even a modest revenue share or performance bonus structure makes this financially compelling.
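The revenue side of this example is worth working through explicitly, because the result depends on how the improvement is defined. This sketch makes three assumptions: ROAS is revenue divided by ad spend (400% = 4.0), the 25% improvement is relative (4.0 becomes 5.0), and monthly spend stays at $500,000.

```python
def incremental_revenue(monthly_spend: float, roas: float, relative_lift: float, months: int = 12) -> float:
    """Extra revenue from lifting ROAS by `relative_lift` while spend stays constant."""
    baseline = monthly_spend * roas * months
    improved = monthly_spend * roas * (1 + relative_lift) * months
    return improved - baseline


print(f"${incremental_revenue(500_000, 4.0, 0.25, months=1):,.0f} per month")  # $500,000 per month
print(f"${incremental_revenue(500_000, 4.0, 0.25):,.0f} per year")             # $6,000,000 per year
```

If "25%" instead meant 25 percentage points (400% to 425%), the gain would be $500,000 × 0.25 = $125,000 per month. Stating which definition you use avoids overstating results in client reports.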

Integration with Existing Workflows

Modern PPC management involves multiple tools working together: bid management platforms, analytics systems, reporting dashboards, and communication tools. How a negative keyword tool integrates with your existing ecosystem significantly impacts operational efficiency.

Rules-based systems typically live within a single platform, requiring manual data export if you want to use insights elsewhere. If your negative keyword rules engine is part of your bid management platform but your reporting happens in a separate BI tool, connecting the data requires custom integration work or manual reporting processes.

AI-powered platforms increasingly offer API access and native integrations with popular agency tools. Negator connects directly to Google Ads via API, exports suggestions to CSV for review in your preferred spreadsheet tool, and provides reporting data that can be incorporated into client dashboards. This flexibility means the tool adapts to your workflow rather than forcing workflow changes around the tool.
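For teams that review suggestions in a spreadsheet, a CSV hand-off is easy to script with Python's standard `csv` module. The column layout below is hypothetical and is not Negator's actual export format.

```python
import csv
import io

# Hypothetical column layout for a review spreadsheet.
FIELDS = ["account", "campaign", "term", "match_type", "reason"]


def suggestions_to_csv(suggestions) -> str:
    """Serialize suggestion dicts to CSV text that any spreadsheet can open."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(suggestions)
    return buffer.getvalue()


rows = [
    {"account": "Client A", "campaign": "Search - Generic", "term": "software jobs",
     "match_type": "phrase", "reason": "job-seeker intent"},
]
print(suggestions_to_csv(rows))
```

To write to disk instead, pass a file opened with `open("suggestions.csv", "w", newline="")` to `csv.DictWriter`, as the `csv` documentation recommends.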

For agencies, integration extends to team collaboration. The most effective AI platforms include multi-user access with permission controls, activity logging for accountability, and commenting features for team coordination on edge case decisions. These collaborative features are rarely found in rules-based systems, which tend to be configured once and then run autonomously.

When to Choose Each Approach: Specific Use Cases

Rules-based automation remains appropriate for specific scenarios. If you manage a single account with very stable traffic patterns and clearly defined exclusion criteria that rarely change, a rules system might suffice. Local service businesses with straightforward offerings and limited search complexity often fall into this category.

Simple, universal exclusions also work well with rules. Terms like "jobs," "careers," "salary," and "Wikipedia" are almost universally irrelevant for commercial advertisers. Creating rules to automatically exclude these obvious waste terms adds value without risk, regardless of business context.
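A rule for these universal terms can stay very small. In the sketch below, the function names are illustrative, and splitting on whitespace is a simplifying assumption (punctuation attached to a word will prevent a match). The output uses Google Ads phrase-match notation for negatives (`"term"`).

```python
# Terms the article treats as almost universally irrelevant for commercial advertisers.
UNIVERSAL_NEGATIVES = ["jobs", "careers", "salary", "wikipedia"]


def universal_hits(search_term: str) -> list[str]:
    """Return the universal negatives that appear as whole words in a search term."""
    words = set(search_term.lower().split())
    return [t for t in UNIVERSAL_NEGATIVES if t in words]


def as_phrase_match(terms: list[str]) -> list[str]:
    """Format negatives in Google Ads phrase-match notation: "term"."""
    return [f'"{t}"' for t in terms]


print(universal_hits("Acme Plumbing Careers"))  # ['careers']
print(as_phrase_match(UNIVERSAL_NEGATIVES))     # ['"jobs"', '"careers"', '"salary"', '"wikipedia"']
```

Negative keywords do not match close variants, so singular forms such as "job" and "career" need their own entries.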

AI-powered platforms excel when business context matters. E-commerce retailers with large product catalogs need tools that understand when "cheap" indicates budget-conscious buyers versus bargain hunters unlikely to convert. B2B companies with long sales cycles need systems that recognize educational intent versus irrelevant research. Professional services firms with premium positioning need automation that protects brand positioning while identifying qualified leads.

Agency scale is perhaps the clearest use case for AI. Managing 20+ client accounts across diverse industries with varying budgets and goals makes rules-based management practically impossible. The contextual intelligence and centralized management offered by AI platforms becomes not just beneficial but operationally necessary. According to research on AI in contextual advertising, AI-driven systems that understand context beyond simple keyword matching deliver significantly better performance in complex multi-account environments.

Special Considerations for Performance Max and Automated Campaigns

Google's Performance Max campaigns present unique negative keyword challenges. These automated campaign types provide limited search term visibility, making traditional manual review nearly impossible. According to 2025 Google Ads benchmarks, automated bidding has become the industry standard, with Maximize Conversions and Target ROAS each capturing about 33% of ad spend.

Rules-based negative keyword tools struggle with Performance Max because they rely on search term report data that Google increasingly restricts. If you cannot see what search terms triggered your ads, you cannot create effective rules to exclude irrelevant patterns. This visibility restriction makes rules-based approaches less viable for modern automated campaign types.

AI-powered platforms maintain effectiveness through alternative data analysis. While search term visibility has decreased, AI systems can analyze conversion patterns, audience signals, and asset performance to infer traffic quality. Advanced platforms combine the limited search term data available with conversion quality signals to identify exclusion opportunities that rules-based systems miss entirely.

Account-level negative keyword lists become more important for Performance Max campaigns. AI tools can build sophisticated account-level exclusion lists based on patterns observed across campaign types, applying learnings from search campaigns with full visibility to Performance Max campaigns with limited visibility. This cross-campaign intelligence provides protection that rules-based approaches cannot match.
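Here is a hypothetical, simplified version of that cross-campaign step. It promotes any negative that several search campaigns arrived at independently to an account-level list, the lever the paragraph above highlights for Performance Max. The `min_campaigns` threshold is an assumption; a real tool would also weigh spend and conversion signals.

```python
from collections import Counter


def account_level_candidates(campaign_negatives: dict[str, set[str]], min_campaigns: int = 2) -> list[str]:
    """Return negatives that independently appear in at least `min_campaigns` campaigns."""
    counts = Counter(term for negatives in campaign_negatives.values() for term in negatives)
    return sorted(term for term, n in counts.items() if n >= min_campaigns)


search_campaign_negatives = {
    "Search - Brand": {"jobs", "free"},
    "Search - Generic": {"jobs", "diy"},
    "Search - Competitor": {"free", "jobs"},
}
print(account_level_candidates(search_campaign_negatives))  # ['free', 'jobs']
```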

Reporting and Insight Generation

Rules-based systems typically provide execution reports: which terms were excluded, which rules triggered, and basic performance metrics. These reports answer "what happened" but rarely provide strategic insights about why certain patterns emerged or what they mean for campaign strategy.

AI-powered platforms generate richer insights because they understand context. Negator's reporting doesn't just show which terms were suggested for exclusion but identifies patterns across your account. You might learn that irrelevant traffic spikes on weekends, that certain product categories attract more waste than others, or that specific competitors trigger irrelevant searches through brand bidding.
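A pattern like a weekend spike in irrelevant traffic can be surfaced with a simple aggregation. This sketch treats non-converting spend as "waste," which is a simplifying assumption, and it uses hypothetical `(day, cost, conversions)` rows.

```python
from collections import defaultdict
from datetime import date


def waste_share_by_day_type(rows):
    """rows: (day, cost, conversions) tuples. Returns non-converting share of spend per day type."""
    cost = defaultdict(float)
    waste = defaultdict(float)
    for day, spend, conversions in rows:
        key = "weekend" if day.weekday() >= 5 else "weekday"  # Saturday=5, Sunday=6
        cost[key] += spend
        if conversions == 0:
            waste[key] += spend
    return {key: waste[key] / cost[key] for key in cost if cost[key] > 0}


rows = [
    (date(2024, 6, 1), 40.0, 0),  # Saturday, no conversions
    (date(2024, 6, 1), 10.0, 1),  # Saturday
    (date(2024, 6, 3), 30.0, 0),  # Monday, no conversions
    (date(2024, 6, 3), 70.0, 2),  # Monday
]
print(waste_share_by_day_type(rows))  # {'weekend': 0.8, 'weekday': 0.3}
```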

For agencies, these insights improve client communication significantly. Rather than reporting "we added 150 negative keywords this month," you can explain "we identified a pattern of irrelevant mobile searches during evening hours that was wasting 12% of your budget, and we've implemented exclusions that redirected this budget to higher-performing desktop traffic during business hours." This strategic narrative demonstrates value far more effectively than execution metrics.

Insight generation also drives continuous improvement. AI platforms that identify trends across your account portfolio can inform strategic decisions about campaign structure, keyword expansion opportunities, and budget allocation. These strategic insights extend the value of negative keyword management beyond waste prevention to active optimization.

Making Your Decision: A Framework for Tool Selection

Start by assessing account volume and complexity. If you manage 1-3 accounts with straightforward offerings and stable traffic patterns, rules-based tools might suffice. If you manage 10+ accounts or even a few highly complex accounts with large product catalogs and diverse traffic patterns, AI-powered platforms deliver better ROI.

Evaluate team resources and expertise. Rules-based systems require team members who can create and maintain sophisticated rule logic. If your team lacks technical configuration expertise or is already stretched thin operationally, AI platforms that require minimal setup and maintenance make more sense. The 10+ hours weekly saved by agencies using AI automation can be reinvested in strategic initiatives or additional client capacity.

Consider budget scale. AI-powered negative keyword management becomes more cost-effective as ad spend increases. If you manage $100,000+ monthly in ad spend, recovering even 5% of that spend from wasted clicks saves $5,000 or more per month, which easily justifies advanced tool costs. For smaller budgets, ensure any tool subscription is proportional to potential savings.

Think about growth trajectory. If your agency plans to scale from 10 to 30 clients over the next year, investing in AI-powered tools that scale efficiently makes strategic sense even if rules-based systems currently suffice. The operational infrastructure you build today determines how efficiently you can grow tomorrow.

Implementation Best Practices Regardless of Tool Choice

Regardless of which tool type you choose, establish performance baselines before implementation. Document current wasted spend, time spent on manual review, and key performance metrics. These baselines enable accurate ROI measurement and help identify what's working after implementation.
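A baseline is most useful if it is recorded in the same shape you will measure later. The sketch below is hypothetical. It defines wasted spend as spend on search terms with zero conversions, which is an assumption, so adjust that definition to match your own reporting.

```python
from dataclasses import dataclass


@dataclass
class Baseline:
    wasted_spend: float          # monthly spend on non-converting search terms (assumed definition)
    review_hours_per_week: float
    conversions: int


def wasted_spend(search_term_rows) -> float:
    """search_term_rows: (cost, conversions) pairs, e.g. from a search term report export."""
    return sum(cost for cost, conversions in search_term_rows if conversions == 0)


def change(before: Baseline, after: Baseline) -> dict[str, float]:
    """After minus before for each tracked metric."""
    return {
        "wasted_spend": after.wasted_spend - before.wasted_spend,
        "review_hours_per_week": after.review_hours_per_week - before.review_hours_per_week,
        "conversions": after.conversions - before.conversions,
    }


before = Baseline(wasted_spend([(120.0, 0), (80.0, 3), (45.5, 0)]), review_hours_per_week=15, conversions=40)
after = Baseline(wasted_spend=90.0, review_hours_per_week=3, conversions=44)
print(change(before, after))  # {'wasted_spend': -75.5, 'review_hours_per_week': -12, 'conversions': 4}
```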

Implement gradually rather than deploying across all accounts simultaneously. Start with 2-3 pilot accounts representing different industries or complexity levels. Learn from initial implementation, refine your processes, and then scale to additional accounts. This staged approach minimizes risk and accelerates learning.

Establish regular review cadences appropriate to account spend and volatility. High-spend accounts might warrant daily suggestion reviews, while smaller stable accounts might need only weekly attention. Match your oversight intensity to risk and opportunity levels rather than applying uniform processes across all accounts.
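Writing the cadence policy down as a small function keeps it consistent across account managers. The thresholds, the "twice weekly" middle tier, and the volatility measure (`new_terms_share`) are all hypothetical choices for illustration.

```python
def review_cadence(monthly_spend: float, new_terms_share: float) -> str:
    """Pick a review frequency from spend and volatility.

    new_terms_share: hypothetical volatility measure, the share of this
    week's search terms never seen before (0.0-1.0). Thresholds are illustrative.
    """
    if monthly_spend >= 50_000 or new_terms_share >= 0.3:
        return "daily"
    if monthly_spend >= 10_000 or new_terms_share >= 0.15:
        return "twice weekly"
    return "weekly"


print(review_cadence(80_000, 0.05))  # daily
print(review_cadence(3_000, 0.02))   # weekly
```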

Document decisions and rationale, especially for edge cases. When you approve or reject a suggestion that doesn't fit obvious patterns, note why you made that decision. This documentation builds institutional knowledge, helps train new team members, and provides context if similar situations emerge later. For AI systems, your decisions also help the platform learn your preferences and improve future suggestions.

The Future of Negative Keyword Automation

Negative keyword automation continues to evolve rapidly. Current AI systems analyze text-based search terms, but future platforms will incorporate image search analysis, voice search patterns, and cross-channel behavior signals. The contextual understanding that separates today's AI tools from rules-based systems will become even more sophisticated as natural language processing advances.

Predictive capabilities represent the next frontier. Rather than reacting to irrelevant searches after they waste budget, advanced AI will predict which new search terms are likely to emerge based on seasonal patterns, competitive changes, and trending topics. Proactive negative keyword suggestions will prevent waste before it occurs rather than cleaning it up after the fact.

Integration depth will increase across marketing technology stacks. Future negative keyword tools will connect with CRM systems to understand which search terms lead to long-term customer value versus one-time purchasers. This lifetime value context will enable more sophisticated exclusion decisions that optimize for true business impact rather than immediate conversion metrics.

The most likely evolution is hybrid systems combining the best of both approaches. AI handles contextual analysis and pattern recognition while allowing you to set strategic rules that override AI suggestions in specific circumstances. This combination leverages machine intelligence while maintaining human strategic control. See how Negator combines AI-powered classification with protected keywords and human oversight to deliver context-aware automation without sacrificing control.

Conclusion: Moving From Decision to Implementation

The choice between AI-powered and rules-based negative keyword tools ultimately depends on your operational scale, team resources, and complexity needs. Rules-based systems work for simple scenarios with stable patterns and limited account volume. AI-powered platforms deliver superior results for agencies, complex accounts, and anyone managing significant ad spend where contextual understanding makes the difference between blocking valuable traffic and eliminating waste.

The investment in sophisticated negative keyword management pays dividends through both time savings and performance improvement. Agencies report saving 10+ hours weekly while improving ROAS by 20-35% within the first month of implementing AI-powered tools. These gains compound over time as systems learn and teams redirect saved time toward strategic initiatives.

Your next steps should include auditing current negative keyword management processes to quantify time investment and wasted spend, evaluating 2-3 tools representing different approaches through trials or demos, and implementing a pilot program with clear success metrics before full deployment. The right tool eliminates one of PPC management's most tedious operational requirements while improving campaign performance, freeing your team to focus on strategy and growth rather than search term spreadsheet reviews.
