Real-Time vs. Batch Processing: Understanding How Negator Analyzes Search Terms Continuously

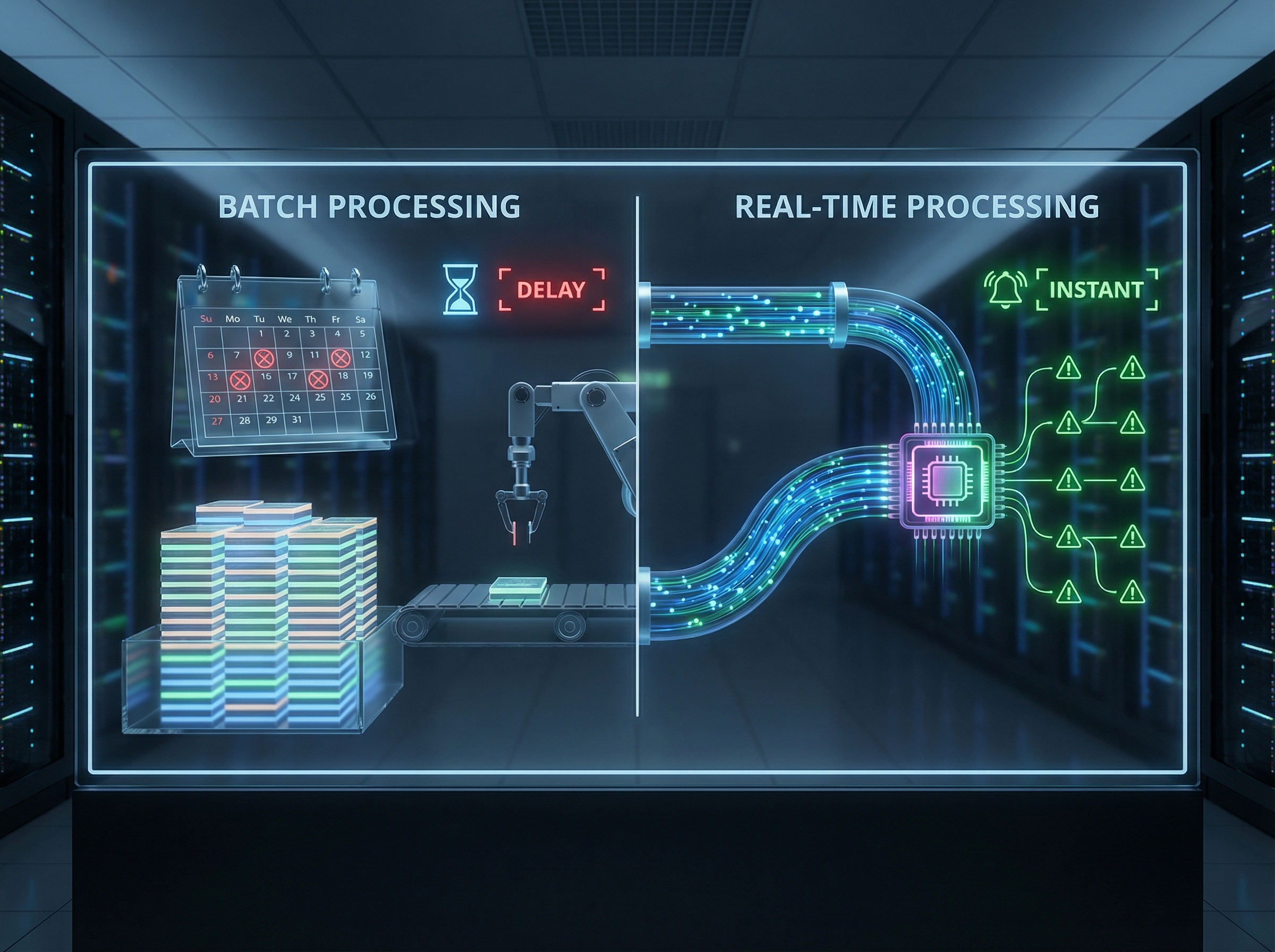

The way you process search term data directly impacts how quickly you can respond to wasted spend. While traditional PPC management relies on weekly or monthly reviews of search term reports, continuous processing analyzes queries in real-time, preventing waste rather than discovering it after the fact.

Why Continuous Search Term Analysis Matters in Modern PPC

Traditional PPC management relies on weekly or monthly reviews of search term reports, and this batch processing approach means irrelevant clicks accumulate for days or weeks before you can act. In the rapidly evolving data analytics landscape, organizations are increasingly replacing legacy batch processing with continuous data flows to enable faster decision-making. For PPC advertisers managing significant budgets, the difference between real-time and batch processing isn't just technical: it's the difference between preventing waste and discovering it after the fact.

Negator takes a fundamentally different approach to search term analysis. Rather than waiting for you to manually review reports on a fixed schedule, the platform analyzes search terms continuously as they occur in your campaigns. This real-time processing model means irrelevant queries are identified and flagged within hours of appearing, not weeks later. Understanding how this continuous analysis works—and why it outperforms traditional batch methods—helps you appreciate the speed and cost savings Negator delivers to your Google Ads management.

For agencies managing multiple client accounts, the processing method you choose becomes even more critical. Batch processing across 20-50 accounts creates bottlenecks where data piles up waiting for analysis. Continuous processing scales naturally, handling each account's search terms as they occur without creating manual review queues. This article breaks down the technical differences between these approaches and shows exactly how Negator's continuous analysis model protects your budget more effectively than traditional periodic reviews.

Understanding Batch Processing: The Traditional PPC Review Model

Batch processing collects data over a specific time period and analyzes it all at once during scheduled review sessions. In PPC management, this typically means downloading search term reports weekly or monthly, reviewing the accumulated queries, and then uploading negative keywords in bulk. According to data engineering best practices, batch processing is ideal for handling large volumes of data that don't require immediate processing and in cost-sensitive scenarios where scheduled processing is acceptable.

The typical batch workflow for search term management looks like this: search terms accumulate in Google Ads throughout the week, you set aside time on Friday afternoon to review the reports, you identify irrelevant queries and create negative keyword lists, and finally you upload those exclusions back to your campaigns. This approach works when budgets are small and irrelevant clicks cost only a few dollars per day. But as spend increases, the cost of waiting days or weeks to address wasted traffic compounds significantly.

The fundamental limitation of batch processing for PPC is that it's reactive rather than preventative. Every irrelevant click that occurs Monday through Thursday goes unaddressed until your Friday review. If you manage 30 client accounts and can only dedicate 15 minutes per account during your weekly review session, you're allocating 7.5 hours to a task that still leaves 6 days of unprotected spend. For agencies, this creates a mathematical problem: the more accounts you manage, the less frequently you can review each one, and the more waste accumulates between review cycles.

Batch processing also creates workflow bottlenecks for teams. Search term reviews require focused attention—you need to understand campaign context, evaluate intent, and make judgment calls about borderline queries. When you batch this work into concentrated sessions, it becomes mentally taxing and error-prone. By hour six of reviewing search terms across multiple accounts, decision fatigue sets in. The queries you approve or reject at 4:00 PM may not receive the same careful consideration as those you reviewed at 10:00 AM. This inconsistency in analysis quality directly impacts campaign performance across your client roster.

Real-Time Processing: Continuous Analysis and Immediate Response

Real-time processing analyzes data immediately as it's generated, enabling instant responses to emerging patterns. Instead of accumulating search terms for periodic review, real-time systems evaluate each query as it occurs and flag issues within minutes or hours. Research shows that PPC automation processes massive amounts of data in seconds, making it possible to conduct instant bid and budget adjustments that outperform manual strategies.

The primary advantage of real-time processing for search term management is prevention rather than detection. When a new irrelevant query starts generating clicks in your campaign, continuous analysis identifies the pattern immediately and alerts you before significant waste occurs. Instead of discovering on Friday that Monday's irrelevant traffic cost $200, you receive an alert Tuesday morning when the pattern first emerges with only $40 in waste. The faster you identify and exclude irrelevant queries, the less budget you lose to them.

Real-time processing also scales more efficiently than batch methods. A continuous analysis system processes each account's search terms independently as they occur, without creating interdependencies or queues. Whether you manage 5 accounts or 50, the system responds to each one's search terms with the same speed. This eliminates the resource allocation problem that batch processing creates, where your time becomes the limiting factor in how quickly you can respond to wasted spend across your entire portfolio.

Continuous processing enables context-aware analysis that improves over time. Because the system evaluates search terms immediately, it can factor in current campaign performance, recent keyword additions, and evolving business priorities. A query that might be irrelevant in January could become valuable in March when you launch a new product line. Real-time systems adapt to these changes automatically, while batch systems may continue applying outdated rules until you manually update your review criteria during your next scheduled session.

How Negator's Continuous Analysis Works

Negator uses a continuous processing architecture that monitors your Google Ads accounts in real-time, analyzing new search terms as they appear in your campaigns. Through direct API integration, the platform receives search term data as Google makes it available—typically within hours of queries generating clicks. This immediate data access allows Negator to begin evaluation long before you would typically schedule a manual review session. The platform processes search terms individually rather than in batches, evaluating each query's relevance based on your active keywords, business profile, and campaign context.

The classification engine analyzes search terms using natural language processing and contextual evaluation. Unlike rule-based systems that apply rigid criteria, Negator understands nuance and intent. The platform examines how a search term relates to your target keywords, considers whether the query indicates buying intent or research behavior, evaluates if the searcher's needs align with your offerings, and applies your business context to determine relevance. This analysis happens automatically for every search term, creating a consistent evaluation standard across all your campaigns without requiring manual review time.

Negator's protected keywords feature demonstrates the importance of context-aware continuous processing. You can designate specific terms that should never be excluded, even if they appear in potentially irrelevant searches. The system monitors for these protected terms in real-time, preventing false positives that might otherwise be flagged as waste. This safeguard operates continuously, not just during scheduled review periods, ensuring valuable traffic remains protected even as new search term variations emerge throughout the month. You can learn more about AI automation safeguards in our detailed guide.
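To make the idea of a protected-term safeguard concrete, here is a minimal sketch in Python. It is not Negator's implementation. The function names and the matching rule (a candidate is held back when it contains every word of a protected term) are assumptions chosen for illustration.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase a phrase and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def filter_protected(
    candidates: list[str], protected_terms: list[str]
) -> tuple[list[str], list[str]]:
    """Split candidate exclusions into (safe_to_recommend, held_back).

    Assumed rule: a candidate is held back when it contains every
    word of at least one protected term.
    """
    protected_sets = [tokenize(term) for term in protected_terms]
    safe, held_back = [], []
    for query in candidates:
        words = tokenize(query)
        # An empty protected term never matches anything.
        if any(p and p <= words for p in protected_sets):
            held_back.append(query)
        else:
            safe.append(query)
    return safe, held_back


# Hypothetical search terms and protected terms.
candidates = ["free crm template", "enterprise crm pricing", "crm jobs near me"]
protected = ["pricing", "enterprise crm"]

safe, held_back = filter_protected(candidates, protected)
print("Safe to recommend as negatives:", safe)
print("Held back (contains a protected term):", held_back)
```

Running the check on every new candidate, rather than once per review session, is what keeps the safeguard continuous.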

The platform generates recommendations continuously rather than on a fixed schedule. As analysis accumulates across your search terms, Negator surfaces patterns that warrant attention. You receive notifications when irrelevant queries reach cost thresholds you've defined, when new negative keyword opportunities emerge, or when search term trends indicate campaign drift. This proactive alerting transforms your workflow from scheduled defensive reviews to responsive optimization based on actual campaign events. Instead of asking "What waste happened last week?" you're answering "What patterns are emerging right now?"
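A cost-threshold alert of the kind described above could look roughly like the following Python sketch. The `TermStats` record, the `irrelevant` flag (assumed to come from an upstream classification step), and the sample figures are all hypothetical; they illustrate the logic, not the platform's actual data model.

```python
from dataclasses import dataclass


@dataclass
class TermStats:
    query: str
    cost: float        # spend attributed to this search term so far
    irrelevant: bool   # assumed output of an upstream relevance check


def terms_to_alert(stats: list[TermStats], cost_threshold: float) -> list[TermStats]:
    """Return irrelevant terms at or above the threshold, most expensive first."""
    flagged = [s for s in stats if s.irrelevant and s.cost >= cost_threshold]
    return sorted(flagged, key=lambda s: s.cost, reverse=True)


# Hypothetical data for one campaign.
stats = [
    TermStats("crm jobs near me", 42.10, True),
    TermStats("free crm template", 18.75, True),
    TermStats("enterprise crm pricing", 96.40, False),
    TermStats("what is a crm", 61.00, True),
]

for term in terms_to_alert(stats, cost_threshold=25.0):
    print(f"ALERT: '{term.query}' has spent ${term.cost:.2f}")
```

Sorting by cost puts the most expensive pattern at the top of the review queue, which matches the goal of responding to the largest leaks first.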

The Speed Advantage: Preventing vs. Discovering Waste

The financial impact of processing speed becomes clear when you calculate accumulated waste. Consider a campaign spending $500 daily with 20% waste going to irrelevant queries. Under weekly batch processing, that's $100 per day in waste, or $700 before your next scheduled review. Real-time processing that identifies and flags the issue within 24 hours limits that waste to $100 before you can act, a difference of $600 per review cycle. If a comparable pattern recurs every week, the difference between 24-hour and 7-day response times adds up to roughly $31,200 a year in prevented waste for a single campaign. When you manage multiple accounts with similar waste patterns, the annual impact quickly reaches six figures.
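The per-cycle arithmetic in this example can be reproduced with a few lines of Python. This is a simplified model: it assumes waste accrues at a constant daily rate until the irrelevant query is excluded, and the function name is illustrative.

```python
def waste_before_action(daily_spend: float, waste_rate: float, response_days: int) -> float:
    """Spend lost to irrelevant queries before an exclusion is added.

    Assumes a constant daily waste rate over the response window.
    """
    return daily_spend * waste_rate * response_days


weekly_batch = waste_before_action(daily_spend=500, waste_rate=0.20, response_days=7)
continuous = waste_before_action(daily_spend=500, waste_rate=0.20, response_days=1)

print(f"Waste with a 7-day response:  ${weekly_batch:,.2f}")   # $700.00
print(f"Waste with a 24-hour response: ${continuous:,.2f}")    # $100.00
print(f"Prevented per cycle:           ${weekly_batch - continuous:,.2f}")  # $600.00
```

Plugging in your own daily spend and an estimated waste rate gives a quick sense of what each extra day of response time costs.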

Response speed becomes even more critical during high-spend periods. If you increase budgets for a seasonal promotion or product launch, any targeting mistakes that attract irrelevant traffic are amplified proportionally. Tripling your budget means waste accumulates three times faster. Batch processing during high-spend periods means you discover significant problems only after they've consumed substantial budget. Continuous analysis alerts you to emerging issues while budgets are elevated, allowing you to correct course before the waste compounds. This makes real-time processing particularly valuable for Q4 retail campaigns, seasonal services, and time-sensitive promotions where every day of unchecked waste represents a larger financial impact.

New campaign launches benefit dramatically from continuous processing. When you launch a campaign with broad match keywords or enter a new market, you lack historical data about which search terms will prove irrelevant. Batch processing means your first review happens days or weeks after launch, after you've already accumulated significant waste from the initial learning period. Continuous analysis provides feedback within hours of the first clicks, allowing you to course-correct during the critical early phase when waste is typically highest. This faster learning cycle helps new campaigns reach profitability more quickly and with less budget wasted on the discovery process.

Processing speed also impacts competitive positioning. Your competitors face the same batch processing limitations if they rely on manual reviews. The advertiser who identifies and excludes irrelevant traffic faster gains an efficiency advantage that compounds over time. Your saved budget can be reallocated to more valuable keywords, better ad positions, or expanded targeting—all while competitors continue spending on the same irrelevant queries you've already excluded. In competitive markets where ROAS differences of 5-10% determine profitability, the continuous processing advantage directly impacts your ability to outbid competitors while maintaining positive returns.

Scaling Search Term Analysis Across Multiple Accounts

Managing search terms across multiple accounts amplifies the limitations of batch processing. If you manage 30 client accounts and allocate 30 minutes per account for weekly search term review, you're dedicating 15 hours each week to this single task. As you add more clients, you face an impossible choice: reduce the time spent on each account (lowering analysis quality), extend the time between reviews (allowing more waste to accumulate), or add team members (increasing costs). None of these options scales efficiently, which is why agencies often report that search term management becomes overwhelming as they grow beyond 20-30 accounts.
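The trade-off is easy to see with a short calculation. The Python sketch below uses illustrative function names: it computes the weekly hours for a given portfolio, then shows how the time available per account shrinks when the hours stay fixed and the account count grows.

```python
def weekly_review_hours(accounts: int, minutes_per_account: float) -> float:
    """Total hours per week spent on manual search term review."""
    return accounts * minutes_per_account / 60


def minutes_available_per_account(budget_hours: float, accounts: int) -> float:
    """Minutes each account gets when total review hours are fixed."""
    return budget_hours * 60 / accounts


print(weekly_review_hours(accounts=30, minutes_per_account=30))        # 15.0

# Holding the team at 15 hours per week while the portfolio grows:
for n in (30, 40, 50):
    minutes = minutes_available_per_account(budget_hours=15, accounts=n)
    print(f"{n} accounts -> {minutes:.1f} minutes each")
```

At 50 accounts, the same 15 hours leaves 18 minutes per account, which is where analysis quality or review frequency starts to give.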

Continuous processing solves the scaling problem by removing human review time as the limiting factor. Whether you manage 10 accounts or 100, the system analyzes search terms for each one independently and simultaneously. There's no queue where Account 30 waits for you to finish reviewing Accounts 1-29. Each account receives the same rapid analysis and alerting, regardless of portfolio size. This consistent service level becomes a competitive advantage for agencies—you can offer the same responsiveness to a small client spending $5,000 monthly as you do to a major account spending $100,000, without the latter subsidizing the time required for the former. For more insights on scaling across multiple accounts, explore our detailed implementation guide.

Consistency across accounts represents another scaling advantage of continuous processing. When human reviewers conduct batch analysis, subtle variations in judgment inevitably occur between accounts, between team members, and even within the same reviewer on different days. One account might receive more conservative exclusions while another gets more aggressive filtering, not due to strategic differences but simply due to when and how the review occurred. Continuous automated analysis applies consistent evaluation criteria across all accounts, eliminating this variability. Clients receive uniform optimization quality, reducing the risk that some accounts are systematically under-optimized due to inconsistent review practices.

Team coordination challenges also diminish with continuous processing. In batch workflows, you need clear processes for who reviews which accounts when, how to handle vacation coverage, and how to onboard new team members on review procedures. Client accounts may be neglected during team transitions or when the assigned manager is unavailable. Continuous systems operate independently of team structure—new clients can be added to automated analysis immediately upon setup, departing team members don't leave coverage gaps, and client service remains consistent regardless of internal staffing changes. This operational resilience becomes increasingly valuable as agencies scale and team complexity increases.

Accuracy Through Context: Why Continuous Processing Improves Over Time

Continuous processing enables learning and adaptation that batch systems cannot match. Because Negator analyzes search terms immediately and sees how campaigns respond to exclusions in real-time, the system develops increasingly accurate understanding of what works for your specific accounts. When a negative keyword is added based on a recommendation, the platform monitors how that exclusion impacts performance. If the exclusion improves efficiency without reducing valuable traffic, similar patterns receive higher priority in future recommendations. If an exclusion inadvertently blocks conversions, the system adjusts its criteria to prevent similar mistakes.

The timing of analysis impacts accuracy in subtle but important ways. When you review search terms in batch mode days or weeks after they occurred, you're evaluating queries outside their campaign context. You may not remember that Tuesday's traffic was affected by a budget change, Thursday's queries came during a competitive bid spike, or Friday's searches coincided with a promotional email send. Continuous processing evaluates search terms while campaign context is fresh and relevant, leading to more accurate classification. The system sees search terms in relation to concurrent campaign events, not as isolated data points stripped of temporal context.

Business context evolves continuously, and your search term evaluation criteria should evolve with it. When you launch a new product, enter a new market, or shift business priorities, the relevance of specific search terms changes. Continuous processing incorporates these changes immediately once you update your business profile or keyword lists. Batch systems, by contrast, continue applying outdated criteria until your next scheduled review session, during which you manually adjust your evaluation approach. The lag between business changes and optimization criteria updates can span weeks with batch processing, during which campaign targeting doesn't reflect current business reality.

Contextual nuance in search term evaluation requires sophisticated analysis that benefits from continuous data flow. Consider a search term like "affordable alternatives"—its relevance depends entirely on your positioning. For a premium brand, this query might indicate price-sensitive searchers unlikely to convert. For a value brand, the same query represents exactly your target customer. Negator's continuous analysis considers not just the query itself but your active keywords, conversion patterns, and business description to make context-aware recommendations. This nuanced evaluation, applied consistently across all search terms as they occur, delivers more accurate classification than rule-based batch filtering. Understanding how AI evaluates context helps you appreciate why continuous analysis outperforms manual review.

Hybrid Approaches: Combining Automation with Human Oversight

The most effective search term management combines continuous automated analysis with strategic human oversight. Negator doesn't make decisions for you—it continuously analyzes search terms and surfaces recommendations that you review and approve before implementation. This hybrid approach captures the speed advantages of real-time processing while maintaining human judgment for borderline cases and strategic decisions. According to industry best practices, modern businesses often need both real-time and batch methods, with platforms built for flexibility to handle both within a single pipeline framework.

The workflow combines continuous machine analysis with periodic human review. Negator processes search terms in real-time, flagging clear irrelevant queries and building confidence scores for each recommendation. You review these recommendations on your schedule—daily, weekly, or when the platform alerts you to significant patterns. This transforms your role from primary analyst to strategic reviewer. Instead of manually evaluating every search term, you focus on the subset that automated analysis flagged as potentially problematic, reviewing the platform's reasoning and either approving or overriding recommendations based on your business knowledge.

This division of labor dramatically improves efficiency. Automated continuous processing handles the high-volume, clear-cut cases where search terms are obviously irrelevant or clearly valuable. Human review focuses on the nuanced middle ground where business judgment and strategic considerations matter most. A search term that's technically relevant to your keywords but strategically wrong for your positioning requires human evaluation. A query that performed poorly in the past but might be worth retesting given campaign changes benefits from human strategic thinking. By allocating continuous processing to volume work and human review to strategic decisions, you complete search term management faster while improving quality.

The hybrid model also creates a feedback loop that improves both automated and human analysis over time. When you approve Negator's recommendations, the system learns which patterns align with your preferences. When you override recommendations, the platform adjusts its criteria to better match your judgment. This collaborative learning means the automated analysis becomes more accurate and requires less human review over time. Simultaneously, you develop better understanding of which search term patterns deserve attention and which can be handled automatically, making your review sessions faster and more focused. The combination improves continuously, unlike pure batch processing where analysis quality depends entirely on consistent human effort.

Practical Implementation: Moving from Batch to Continuous Processing

Transitioning from batch to continuous search term processing begins with assessing your current review frequency and coverage. Document how often you review search terms for each account, how much time each review requires, and how much waste accumulates between reviews. This baseline measurement helps you quantify the improvement opportunity. If you currently review accounts every two weeks and identify an average of $300 in wasted spend per review, you're looking at $600 in waste over a monthly cycle per account. Multiply this by your account count to understand the total budget at risk under your current batch approach.
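A baseline like this can be kept in a small script so it is easy to rerun as your numbers change. In the Python sketch below, the function name is illustrative and the 30-account portfolio is an assumed figure, not a benchmark.

```python
def monthly_budget_at_risk(waste_per_review: float, reviews_per_month: int, accounts: int) -> float:
    """Estimated monthly waste discovered after the fact across a portfolio.

    Assumes every account follows the same review cadence and waste level.
    """
    return waste_per_review * reviews_per_month * accounts


# Reviews every two weeks (2 per month), $300 of waste found per review.
per_account = monthly_budget_at_risk(300, reviews_per_month=2, accounts=1)
portfolio = monthly_budget_at_risk(300, reviews_per_month=2, accounts=30)

print(f"Per account: ${per_account:,}")   # $600
print(f"Portfolio:   ${portfolio:,}")     # $18,000
```

In practice the per-review waste will differ by account, so replacing the single average with per-account figures gives a more realistic total.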

Integration with existing workflows determines adoption success. Negator connects directly to your Google Ads accounts through API access, requiring no changes to your campaign structure or bidding strategies. The platform analyzes search terms in the background while your campaigns continue running normally. You can start by monitoring Negator's recommendations without implementing them, allowing you to compare automated analysis against your manual reviews. This parallel operation builds confidence in the platform's accuracy before you shift from manual batch reviews to continuous processing with periodic review of automated recommendations. For specific integration steps, review our guide on integrating Negator into your agency stack.

A phased rollout reduces risk and allows for learning. Start with 3-5 accounts that have clean historical data and consistent performance. Configure Negator's analysis for these accounts, set your protected keywords to prevent over-exclusion, and review the initial recommendations the platform generates. Compare these suggestions against what you would have identified in your next manual review. This validation phase typically takes 1-2 weeks and builds team confidence in the continuous processing approach. Once you're comfortable with recommendation quality, expand to additional accounts in groups of 10-15, applying lessons learned from the initial rollout.

Ongoing management of continuous processing requires different skills than batch review. Instead of dedicating blocks of time to search term analysis, you respond to alerts and patterns the platform surfaces. You might receive a notification that a specific campaign has generated unusual irrelevant traffic, prompting a 10-minute focused review rather than a 60-minute comprehensive audit. You review weekly summary reports showing prevented waste and implemented exclusions rather than manually combing through search term exports. This shift from scheduled defensive reviews to responsive optimization allows you to maintain coverage across more accounts with the same or less time investment, fundamentally changing how your team scales campaign management.

Measuring the Impact of Continuous Processing

The first metric to track is response time: how quickly do you identify and address irrelevant search terms under continuous processing versus batch methods? Measure the average days between when a search term first generates clicks and when you add it as a negative keyword. Under batch processing, this typically ranges from 7-30 days depending on your review frequency. With continuous analysis, you should see this drop to 1-3 days as you review and approve recommendations shortly after the platform flags them. The reduction in response time directly correlates with prevented waste, as each day earlier you exclude irrelevant terms is a day those terms don't consume budget.
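One way to compute this metric is sketched below in Python. It assumes you can export, for each excluded term, the date it first generated a click and the date it was added as a negative keyword. The dates shown are made up for illustration.

```python
from datetime import date
from statistics import mean


def average_response_days(events: list[tuple[date, date]]) -> float:
    """Mean days between a term's first click and its exclusion.

    Each event is a (first_click_date, negative_added_date) pair.
    """
    return mean((added - first_click).days for first_click, added in events)


# Hypothetical exclusions under a Friday batch review.
batch_events = [
    (date(2024, 3, 4), date(2024, 3, 15)),
    (date(2024, 3, 6), date(2024, 3, 15)),
    (date(2024, 3, 11), date(2024, 3, 22)),
]

# Hypothetical exclusions approved shortly after being flagged.
continuous_events = [
    (date(2024, 3, 4), date(2024, 3, 5)),
    (date(2024, 3, 6), date(2024, 3, 8)),
    (date(2024, 3, 11), date(2024, 3, 12)),
]

print(f"Batch:      {average_response_days(batch_events):.1f} days")
print(f"Continuous: {average_response_days(continuous_events):.1f} days")
```

Tracking the same figure month over month shows whether your approval habits are keeping pace with the alerts.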

Track prevented waste as your primary ROI metric. Negator reports weekly and monthly summaries of how much spend was avoided through exclusions the platform recommended. Compare this prevented waste figure to your platform costs to calculate direct ROI. Most agencies see that prevented waste exceeds platform costs by 10-20x within the first month, and this ratio improves as the system learns your preferences and applies more refined recommendations. Beyond direct platform ROI, compare overall campaign efficiency (cost per conversion, ROAS) before and after implementing continuous processing to capture the broader impact of cleaner traffic. Our guide on measuring automation ROI provides detailed frameworks for this analysis.

Coverage metrics reveal whether continuous processing improves your ability to maintain consistent optimization across all accounts. Measure what percentage of your managed accounts receive search term review in a given week under your current batch approach versus with continuous processing. You'll likely find that batch methods result in 60-80% weekly coverage due to time constraints and prioritization decisions, while continuous processing delivers 100% coverage since the platform monitors all connected accounts simultaneously. This improved coverage means smaller accounts and lower-spend campaigns receive the same optimization attention as your largest clients, improving portfolio-wide performance rather than just optimizing your top accounts.
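Weekly coverage is simple to compute once you log which accounts were actually reviewed. The Python sketch below uses hypothetical account names and an illustrative function name.

```python
def weekly_coverage(managed_accounts: list[str], reviewed_accounts: list[str]) -> float:
    """Percentage of managed accounts that received a search term review this week."""
    managed = set(managed_accounts)
    if not managed:
        return 0.0
    # Ignore reviews logged against accounts that are no longer managed.
    reviewed = managed & set(reviewed_accounts)
    return 100 * len(reviewed) / len(managed)


managed = [f"client-{i}" for i in range(1, 11)]   # 10 hypothetical accounts
reviewed_this_week = managed[:7]                  # only 7 were reviewed

print(f"Coverage: {weekly_coverage(managed, reviewed_this_week):.0f}%")  # Coverage: 70%
```

Logging this weekly also makes it visible which accounts are skipped most often, which tends to be the smaller ones.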

Finally, track team efficiency gains through time allocation analysis. Measure how many hours your team spends on search term review and negative keyword management before and after implementing continuous processing. Most agencies report 10+ hours per week in time savings once continuous analysis replaces manual batch reviews. This freed capacity can be redirected to strategic work like campaign planning, creative development, or client consultation—higher-value activities that drive business growth rather than defensive maintenance. The efficiency gain becomes particularly visible when you add new clients, as continuous processing allows you to scale account management without proportionally scaling manual review time.

Conclusion: The Continuous Processing Advantage

The shift from batch to continuous processing represents a fundamental change in how you approach search term management. Rather than waiting for waste to accumulate and then discovering it during scheduled reviews, you prevent waste through immediate analysis and rapid response. This paradigm shift transforms search term optimization from a reactive cleanup task into a proactive protection mechanism. The faster you identify irrelevant traffic patterns, the less budget they consume, and the more efficient your campaigns become.

For agencies managing multiple accounts, continuous processing solves the scaling challenge that makes batch methods increasingly impractical as you grow. You cannot hire your way out of the manual review bottleneck—adding team members to conduct more batch reviews simply increases costs without fundamentally improving response times or coverage. Continuous automated analysis, by contrast, scales perfectly: the hundredth account receives the same rapid analysis as the first, with no incremental time investment required from your team. This scalability advantage becomes more valuable with every account you add.

The technical differences between batch and continuous processing directly translate to financial outcomes. Faster response times mean less accumulated waste. Better coverage means consistent optimization across all accounts, not just the ones you had time to review this week. Context-aware analysis means more accurate classification and fewer false positives that inadvertently block valuable traffic. Together, these advantages compound into significantly improved campaign efficiency and measurably better ROAS across your entire account portfolio.

Negator's continuous processing model delivers these advantages immediately upon implementation. The platform begins analyzing your search terms in real-time as soon as you connect your accounts, building recommendations while you continue managing campaigns normally. You don't need to change your campaign structure, adjust your bidding strategies, or modify your workflow—Negator operates in the background, surfacing opportunities for you to review and approve. If you're ready to move beyond batch processing and see how continuous analysis protects your clients' budgets more effectively, explore how Negator handles automated search term classification at scale.
